Designing AI that knows when not to act

I designed Gia, an intervention system for motorcyclists that improves safety by controlling when and how it intervenes.

Context

Motorcycling is a high-risk, attention-saturated activity.

Riders continuously process:

- speed

- road conditions

- navigation

- risk

Most rider technologies fail by:

- increasing visual load

- interrupting at the wrong time

After experiencing a motorcycle accident, I reframed the problem:

Not how to add information,

but how to intervene without adding cognitive load.

The problem

This was not an interface problem.

It was human–AI coordination under constraint:

- attention already saturated

- reaction time critical

- interruptions risky

The core challenge:

How does a system help without competing for attention?

The shift

I reframed the system from information delivery to intervention control.

From → to:

- more signals → fewer, higher-value signals

- constant assistance → selective intervention

- visibility → timing

In safety-critical systems:

Value comes from when the system acts, not how much it shows.

The system

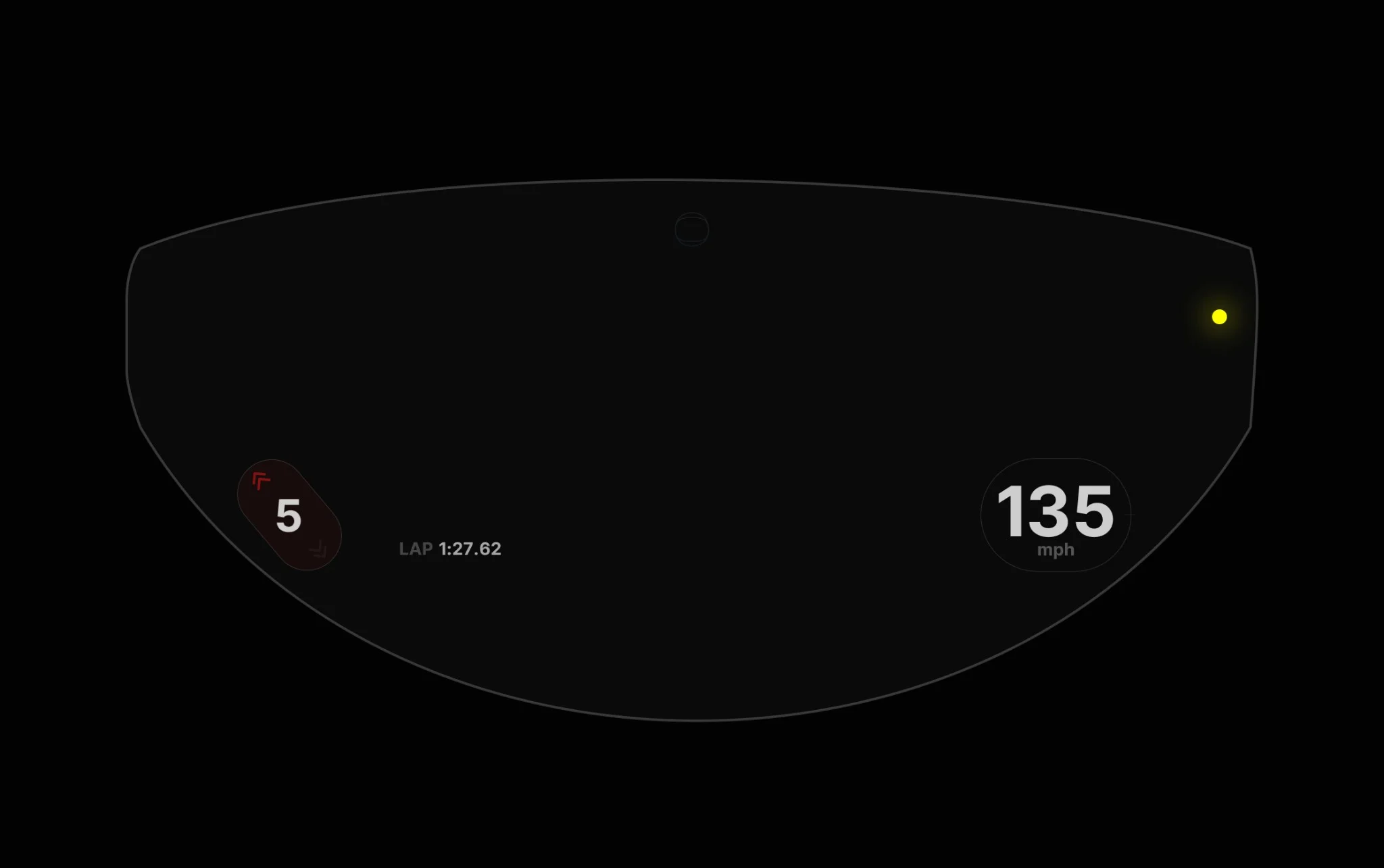

Gia operates as a real-time intervention system:

- Observes continuously

- Predicts high-risk moments

- Intervenes selectively

- Stays silent when confidence is low

The system prioritizes what not to surface.
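The observe, predict, intervene-or-stay-silent behavior described above can be sketched as a small gating function. This is a minimal illustration, not Gia's actual implementation: `RiskEstimate`, `should_intervene`, and both threshold values are hypothetical names and numbers chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskEstimate:
    """Hypothetical output of the prediction step."""
    risk: float        # estimated likelihood of a hazard, 0.0 to 1.0
    confidence: float  # how much the system trusts its own estimate, 0.0 to 1.0


# Assumed thresholds for illustration only; real values would need tuning.
RISK_THRESHOLD = 0.7
CONFIDENCE_THRESHOLD = 0.8


def should_intervene(estimate: RiskEstimate) -> bool:
    """Return True only for high-risk moments the system is confident about."""
    if estimate.confidence < CONFIDENCE_THRESHOLD:
        return False  # stay silent when confidence is low
    return estimate.risk >= RISK_THRESHOLD
```

The confidence check comes first on purpose: in this sketch an uncertain estimate never reaches the rider, however alarming the predicted risk looks.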

Design & prototyping

I focused on identifying when riders have spare cognitive capacity and when they are overloaded.

This included:

- mapping high-risk vs low-attention moments

- testing voice timing under real riding constraints

- evaluating when an intervention helps the rider and when it disrupts them

Every decision was evaluated against:

Does this reduce cognitive burden in motion?

Voice

Voice was the primary interface.

When eyes and hands are occupied, spoken cues preserve situational awareness.

- short

- contextual

- timed to moments of availability

This was not conversational AI.

It was controlled intervention.
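One way to picture "timed to moments of availability" is a scheduler that holds a spoken cue until the rider has capacity and drops it once it is stale. The sketch below is an assumption-laden illustration rather than Gia's real design: `Cue`, `CueScheduler`, the `rider_available` flag, and the `max_age` expiry are all hypothetical, and it models non-urgent cues only.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Cue:
    text: str
    created_at: float  # time the cue was generated, in seconds
    max_age: float     # seconds after which the cue is no longer useful


class CueScheduler:
    """Holds at most one pending cue and releases it only when the rider is available."""

    def __init__(self) -> None:
        self._pending: Optional[Cue] = None

    def submit(self, cue: Cue) -> None:
        # A newer cue replaces the older one, so the rider never hears a backlog.
        self._pending = cue

    def tick(self, now: float, rider_available: bool) -> Optional[str]:
        """Return the text to speak now, or None to stay silent."""
        cue = self._pending
        if cue is None:
            return None
        if now - cue.created_at > cue.max_age:
            self._pending = None  # stale: drop it silently
            return None
        if not rider_available:
            return None  # hold the cue until a calmer moment
        self._pending = None
        return cue.text
```

A cue submitted mid-corner would be held while `rider_available` is false and spoken on the next available tick, unless it has expired by then, in which case the rider hears nothing.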

Design decisions

- Selective intervention over constant feedback

  The system only surfaces high-value signals.

  Result: higher trust and lower cognitive load.

- Haptics and voice over visual interfaces

  Reduced reliance on visual attention.

  Result: safer interaction under motion.

- Timing over information density

  Optimized when signals appear, not how many.

  Result: improved reaction and reduced distraction.

- Behavioral adaptation over configuration

  System learns from rider behavior instead of setup.

  Result: lower friction and more natural integration.

- Explicit failure states over false confidence

  Defined how the system behaves under uncertainty.

  Result: increased reliability and trust.

Tradeoffs

Designing for safety required restraint:

- Less information vs safer interaction

- Fewer features vs higher trust

- Conservative outputs vs perceived intelligence

We prioritized reliability over capability.

Impact

Gia demonstrates a viable model for AI in attention-constrained environments:

- Reduced reliance on visual interfaces

- Higher trust through predictable intervention

- Improved signal-to-noise ratio

- Better alignment between system behavior and rider expectations

The key validation:

AI effectiveness depends on restraint and timing, not capability.

Reflection

Gia changed how I think about AI systems.

The challenge is not intelligence.

It is control.

Designing when a system should act, remain silent, or defer requires defining boundaries, not features.

Key takeaways:

- attention is the primary constraint

- timing matters more than capability

- trust comes from restraint